Also, I think it would be really cool to integrate a simple realtime html editor/viewer into the question. This might be a good candidate: https://github.com/tomhodgins/liveeditor

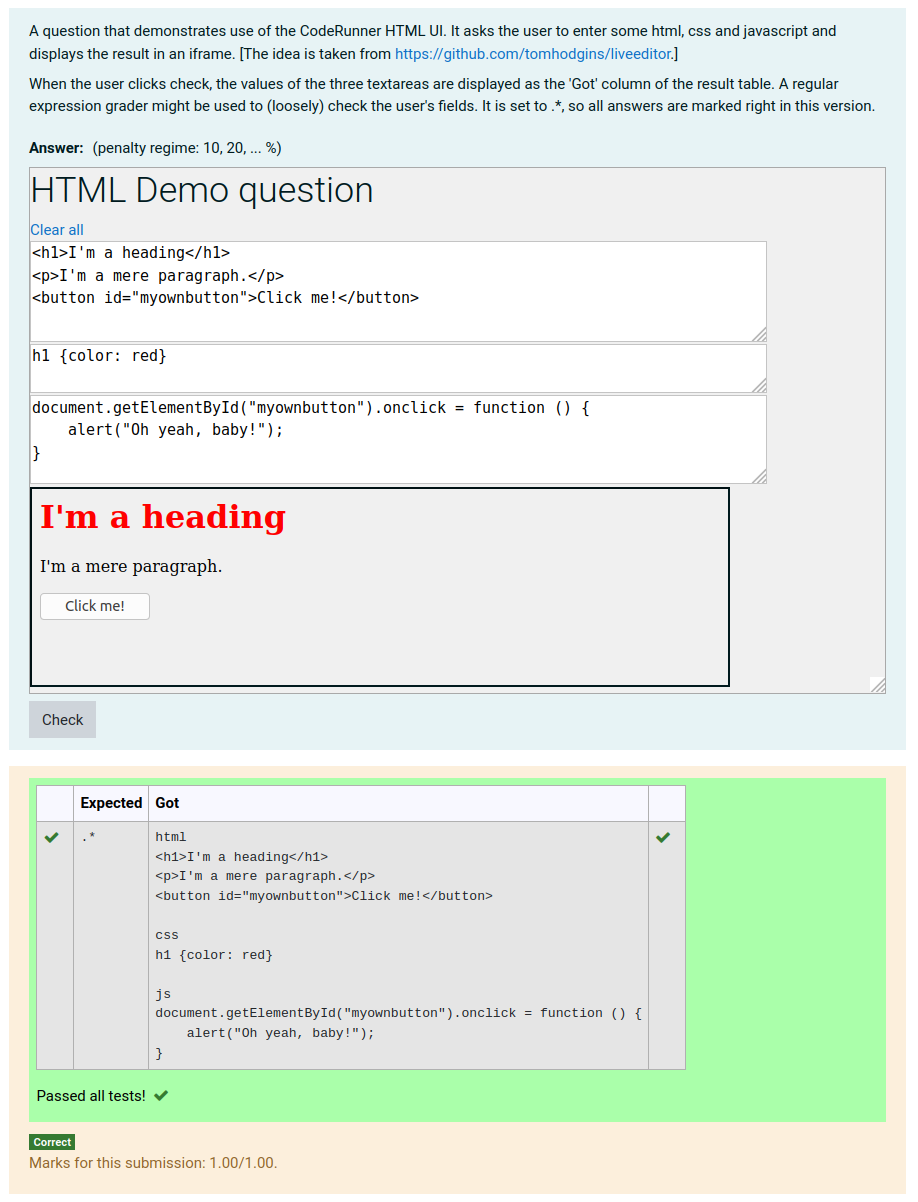

To take your second point first - the HTML UI can be used to display the tomhodgins live editor out-of-the-box. Just paste in his .js file to the global extra. And if you then strip out the use of web storage and add a suitable name attribute plus the required class 'coderunner-ui-element' to all text areas, you have a question type that passes you back the HTML, CSS and JavaScript ready for grading. Here's what it looks like (xml export attached):

You'll see I rewrote the JavaScript in my own baroque fashion to make it more readable, because I was playing with it as a potential demo of the HTML-UI.

The challenge is to (a) specify exactly what you want the student to do and (b) mark their submission in a meaningful way. The question text suggests using regular expressions, but that's at best an indicative grading since there are lots of ways of producing a specified outcome.
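As a rough illustration of one alternative to regular expressions, a Python grader could parse the submission with the standard library's `html.parser` and check which elements are present. This is only a sketch: `ElementCollector`, `collect_elements` and `missing_tags` are hypothetical names, it assumes a small, well-nested fragment, and it records only the text directly inside each element.

```python
from collections import Counter
from html.parser import HTMLParser

# Elements that never have a closing tag, so they are not pushed on the stack.
VOID_TAGS = {"br", "hr", "img", "input", "meta", "link"}


class ElementCollector(HTMLParser):
    """Collect a (tag, text) pair for every properly closed element."""

    def __init__(self):
        super().__init__()
        self.elements = []  # completed (tag, text) pairs, in closing order
        self._open = []     # stack of [tag, text_parts] for open elements

    def handle_starttag(self, tag, attrs):
        if tag not in VOID_TAGS:
            self._open.append([tag, []])

    def handle_data(self, data):
        # Text is credited to the innermost open element only.
        if self._open:
            self._open[-1][1].append(data)

    def handle_endtag(self, tag):
        if self._open and self._open[-1][0] == tag:
            name, parts = self._open.pop()
            self.elements.append((name, "".join(parts).strip()))


def collect_elements(html):
    """Return the (tag, text) pairs found in an HTML fragment."""
    parser = ElementCollector()
    parser.feed(html)
    parser.close()
    return parser.elements


def missing_tags(html, required):
    """Return the required tags (with multiplicity) absent from html."""
    found = Counter(tag for tag, _ in collect_elements(html))
    return list((Counter(required) - found).elements())


submission = "<h1>Donald Duck</h1>\n<h2>Topic 1</h2>"
print(collect_elements(submission))
print(missing_tags(submission, ["h1", "h2", "h2"]))
```

This still only checks structure, not rendered appearance, so it remains an indicative grading rather than a definitive one.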

There is a thread on using regular expressions to grade HTML here, though it probably doesn't add much. And another thread here that draws attention to an issue that might occur if you use questions like the above in a quiz that presents the students with your answer when they review the result (due to duplicated HTML element IDs).

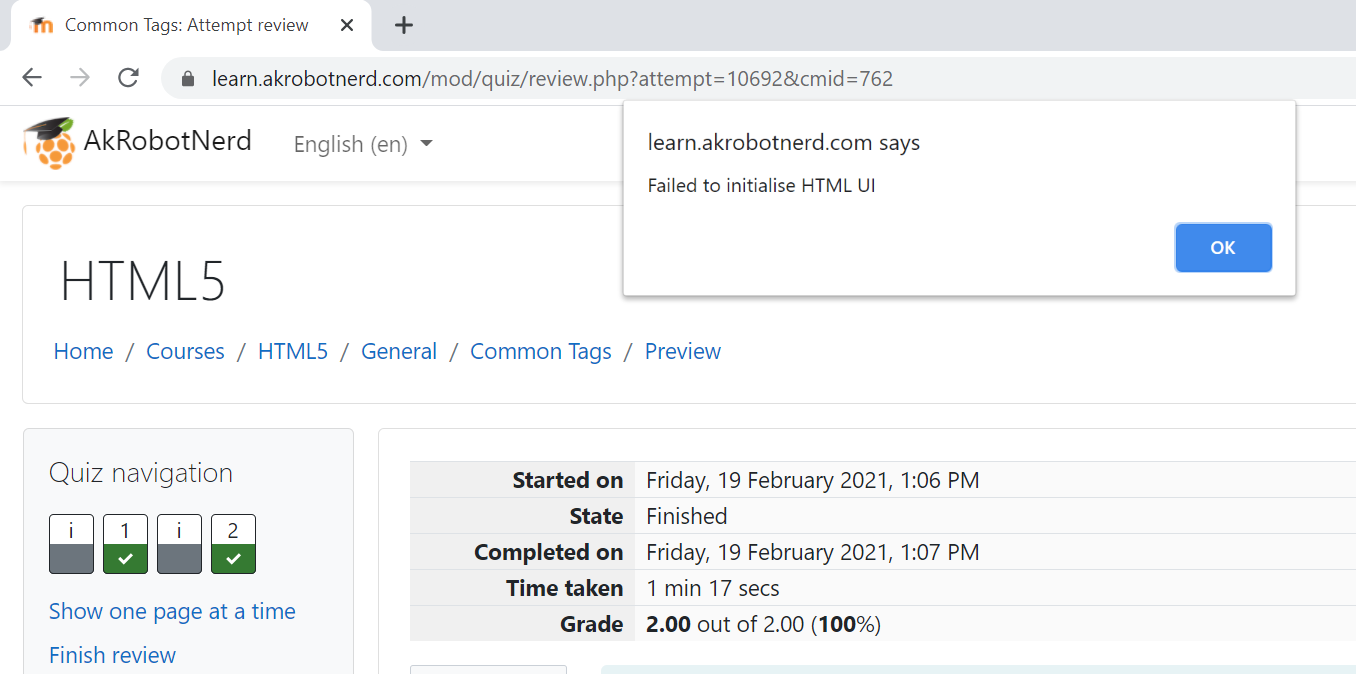

Based on your response to Khen and to me, I created an html_via_python question prototype that works with the live editor. However, when I submit all and finish a quiz, I get the following alert:

Not sure what to do about it. I've attached my most recent question prototype and the quiz questions.

Also, I validate by matching tags and by using the data between tags as an example of what students should include (I actually force them to come up with something different from my example). I foresee a time when I would like students to either match what I have exactly, or match a list of options I give them. Any idea on how I could go about achieving that functionality while still maintaining the ability to provide suggestions for the data in my feedback that are based on the answer? My goal is to increase engagement by allowing students to respond in creative or unique ways while providing useful feedback.

Last but not least, I'm planning to sink some time into developing a better html_via_python question prototype as well as more questions. Any chance you're willing to create a repository for such questions to facilitate sharing?

This is coming along nicely. But the error you're getting is because your sample answer (QUESTION.answer) is invalid, as you'll see if you preview the question and click Fill in correct response. That's why you only see it at the end of the quiz, when reviewing it and displaying the author's answers.

This is a bit subtle. To quote from the documentation of the HTML UI:

When authoring a question that uses the html UI, the answer and answer preload fields are not controlled by the UI, but are displayed as raw text in the authoring form. If data is to be entered into these fields, it must be of the form

{"<fieldName>": "<fieldValueList>",...}

where fieldValueList is a list of all the values to be assigned to the fields with the given name, in document order. For complex UIs it is easiest to turn off validate on save, save the question, preview it, enter the right answers into all fields, type CTRL-ALT-M to switch off the UI and expose the serialisation, then copy that serialisation back into the author form.

So in your case the correct answer for your question h2 should be something like

{"crui_html":["<h1>Donald Duck</h1>\n<h2>Topic 1</h2>\n<h2>Topic 2</h2>"]}
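If typing that serialisation by hand is error-prone, it can be generated from the raw HTML. The following is a minimal sketch using Python's `json` module; `serialise_html_ui_answer` is a hypothetical helper, not part of CodeRunner, and the compact separators are chosen only so the output matches the serialisation shown above.

```python
import json


def serialise_html_ui_answer(field_values):
    """Serialise {field name: [values in document order]} as JSON text."""
    return json.dumps(field_values, separators=(",", ":"))


raw_html = "<h1>Donald Duck</h1>\n<h2>Topic 1</h2>\n<h2>Topic 2</h2>"
serialised = serialise_html_ui_answer({"crui_html": [raw_html]})

# The real newlines in raw_html are written as the two characters \n.
print(serialised)
```

The printed text can then be pasted into the answer field of the authoring form.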

However, you'll have to change your template code to correctly process the sample answer in exactly the same way as you process the student answer.

While looking through the code again I see I didn't adequately deal with multiline answers. Rather than

student_answer = json.loads("""{{ STUDENT_ANSWER | e('py') }}""")

it should be

student_answer = json.loads(r"""{{ STUDENT_ANSWER }}""")

where I've used a raw string to prevent the embedded '\n' from being converted to a newline, and dispensed with the python escaper as it shouldn't be possible to get triple quotes in a JSON string (AFAIK?).
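The difference can be reproduced outside CodeRunner. In this sketch the two string literals stand in for what the template would expand to once the student's serialised answer has been substituted; the sample HTML is made up for illustration.

```python
import json

# Non-raw literal: Python converts \n to a real newline, which is not
# allowed inside a JSON string, so json.loads rejects it.
expanded_plain = """{"crui_html":["<h1>A</h1>\n<h2>B</h2>"]}"""

# Raw literal: the backslash and the n survive, and json.loads turns
# them into a newline while decoding.
expanded_raw = r"""{"crui_html":["<h1>A</h1>\n<h2>B</h2>"]}"""

try:
    json.loads(expanded_plain)
    plain_ok = True
except json.JSONDecodeError:
    plain_ok = False

student_answer = json.loads(expanded_raw)
html = student_answer["crui_html"][0]

print(plain_ok)
print(html)
```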

When fixing your template you'll need to treat the sample answer in the same way.

Richard

I made the changes you suggested. No more alerts. Thanks. However, I did notice that when reviewing the quiz, the live editor is still live. Any ideas on how to kill it unless it's embedded in a question that hasn't yet been checked? I'm wondering if the JavaScript could somehow pick up on the status of the question and enable/disable the textarea. Is there something specific I could detect in the HTML UI that's only present when the question is unanswered that I could use?

Another thought. I can see that entering the raw HTML into the answer box is much more author friendly than entering the serialisation required by the HTML UI. So you could either:

- Set the quiz setting to not show your answer to the students (which would also prevent the alerts) or

- Put the raw HTML answer into the Extra field instead.

When reviewing a quiz, the answer text areas are readonly. So the JavaScript should check the original textarea and, if it's readonly, mark its own new textarea readonly too. It should also turn off event handling.

Finding the original text area is slightly tricky in the current master branch of CodeRunner. The development version has a ___textareaId___ macro (see here) which makes it easier. This should be pushed to master by the end of this month.

Using that macro, a modified version of your globalextra HTML would be something like the following (which seems to work OK, though with minimal testing):

<script>
// Based on https://github.com/tomhodgins/liveeditor.
$(document).ready(function() {
    var doc = $(document),
        html = $(document.getElementById('crui_html___textareaId___')),
        ta = $(document.getElementById("___textareaId___")),
        iframedoc = document.getElementById("preview___textareaId___").contentWindow.document;

    // Render the current contents of the HTML textarea into the preview iframe.
    function show_preview() {
        iframedoc.write("<head><\/head><body>" + html.val() + "<\/body>");
        iframedoc.close();
    }

    show_preview();
    if (ta.is('[readonly]')) {
        // Reviewing: lock the editor and don't attach the keyup handler.
        html.prop('readonly', true);
    } else {
        doc.keyup(show_preview);
    }
});
</script>

<textarea id="crui_html___textareaId___" rows="5" style="width: 100%; max-width: 100%; font-family: monospace" value="" class="coderunner-ui-element" name='crui_html' spellcheck="false" placeholder="HTML" autocapitalize="off" autofocus></textarea>
<br>
<iframe id="preview___textareaId___" style="width: 100%; max-width: 100%;" height="200"></iframe>

In case you're wondering: I've had to use document.getElementById rather than the simpler jQuery $("#...") syntax because the full textareaId contains characters (I think colons) that jQuery interprets as selector syntax rather than as part of the ID.

Taking your second point about creating a repository for sharing questions ... I'm happy to create another "course" on this server, like the various other repo courses (C/C++, Python, Java etc.) if that's what you mean. But this approach hasn't proved all that useful as a way of sharing questions. As you'll see from the various forum postings, different teachers have wildly different needs, and I've come to the conclusion that teachers generally have to write their own questions to fit in with their own particular curriculum, teaching style, language etc.

However, if you want me to create an "HTML" course, just say.

And I'm open to other suggestions on how to share questions among the coderunner community.

So, I've got my questions that use a parser and look for matches working pretty well. Now I'm trying to run code through a validator. Here's what I've got so far:

import subprocess, sys

student_answer = """{{ QUESTION.answer }}"""

with open("page.html", "w") as src:
    print(student_answer, file=src)

subprocess.call(["java", "-jar", "/usr/share/java/vnu.jar", "page.html"])

Unfortunately, I'm getting the following error message when I test the question:

Error occurred during initialization of VM
Could not reserve enough space for 251904KB object heap

I know you use python's subprocess a lot. Have you ever run into this error? Have any ideas on how I should handle it?

Just increase the memory limit for the question. It's under Customise > Advanced Customisation > Memory Limit (MB). Or just set it to 0 to turn off the memory limit altogether (not safe when running student code but in this case you're not).
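Once the validator actually runs, you may also want to capture what it prints rather than letting it pass straight through, so the template can turn it into feedback. A minimal sketch, assuming the checker reports problems on stdout or stderr and signals failure with a non-zero exit status (I haven't verified exactly how vnu.jar behaves here); `run_checker` is a hypothetical helper, and the demonstration uses the Python interpreter as a stand-in so it runs without Java.

```python
import subprocess
import sys


def run_checker(command, timeout=30):
    """Run an external checker and return (exit_code, combined_output)."""
    result = subprocess.run(
        command,
        stdout=subprocess.PIPE,
        stderr=subprocess.STDOUT,  # fold stderr into stdout
        text=True,
        timeout=timeout,
    )
    return result.returncode, result.stdout


# Stand-in for the real call, which in the template would be
# run_checker(["java", "-jar", "/usr/share/java/vnu.jar", "page.html"])
fake_checker = [
    sys.executable,
    "-c",
    "import sys; print('oops', file=sys.stderr); sys.exit(1)",
]
code, output = run_checker(fake_checker)
print(code, repr(output))
```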